Monday, 2 March 2026

Over $200 billion to be infused in creating AI-related infra in India

Friday, 6 February 2026

AI is coming to Olympic judging: what makes it a game changer?

Willem Standaert, Université de Liège

As the International Olympic Committee (IOC) embraces AI-assisted judging, this technology promises greater consistency and improved transparency. Yet research suggests that trust, legitimacy, and cultural values may matter just as much as technical accuracy.

The Olympic AI agenda

In 2024, the IOC unveiled its Olympic AI Agenda, positioning artificial intelligence as a central pillar of future Olympic Games. This vision was reinforced at the very first Olympic AI Forum, held in November 2025, where athletes, federations, technology partners, and policymakers discussed how AI could support judging, athlete preparation, and the fan experience.

At the 2026 Winter Olympics in Milano-Cortina, the IOC is considering using AI to support judging in figure skating (men’s and women’s singles and pairs), helping judges precisely identify the number of rotations completed during a jump. Its use will also extend to disciplines such as big air, halfpipe, and ski jumping (ski and snowboard events where athletes link jumps and aerial tricks), where automated systems could measure jump height and take-off angles. As these systems move from experimentation to operational use, it becomes essential to examine what could go right… or wrong.

Judged sports and human error

In Olympic sports such as gymnastics and figure skating, which rely on panels of human judges, AI is increasingly presented by international federations and sports governing bodies as a solution to problems of bias, inconsistency, and lack of transparency. Judging officials must assess complex movements performed in a fraction of a second, often from limited viewing angles, for several hours in a row. Post-competition reviews show that unintentional errors and discrepancies between judges are not exceptions.

This became tangible again in 2024, when a judging error involving US gymnast Jordan Chiles at the Paris Olympics sparked major controversy. In the floor final, Chiles initially received a score that placed her fourth. Her coach then filed an inquiry, arguing that a technical element had not been properly credited in the difficulty score. After review, her score was increased by 0.1 points, temporarily placing her in the bronze medal position. However, the Romanian delegation contested the decision, arguing that the US inquiry had been submitted too late – exceeding the one-minute window by four seconds. The episode highlighted the complexity of the rules, how difficult it can be for the public to follow the logic of judging decisions, and the fragility of trust in panels of human judges.

Fraud has also been observed: many still remember the figure skating judging scandal at the 2002 Salt Lake City Winter Olympics. After the pairs event, allegations emerged that a judge had favoured one duo in exchange for promised support in another competition – revealing vote-trading practices within the judging panel. It is precisely in response to such incidents that AI systems have been developed, notably by Fujitsu in collaboration with the International Gymnastics Federation.

What AI can (and cannot) fix in judging

Our research on AI-assisted judging in artistic gymnastics shows that the issue is not simply whether algorithms are more accurate than humans. Judging errors often stem from the limits of human perception, as well as the speed and complexity of elite performances – making AI appealing. However, our study involving judges, gymnasts, coaches, federations, technology providers, and fans highlights a series of tensions.

AI can be too exact, evaluating routines with a level of precision that exceeds what human bodies can realistically execute. For example, where a human judge visually assesses whether a position is properly held, an AI system can detect that a leg or arm angle deviates by just a few degrees from the ideal position, penalising an athlete for an imperfection invisible to the naked eye.
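To make the "few degrees" point concrete, here is a minimal sketch of how a joint angle could be derived from three tracked body keypoints and compared with an ideal position. It is an illustration only, not the method used by any federation or vendor: the keypoint coordinates, the 180-degree ideal and the one-degree tolerance are all assumptions chosen for the example.

```python
import math

def joint_angle(a, b, c):
    """Angle in degrees at point b, formed by the segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    norm = math.hypot(*v1) * math.hypot(*v2)
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))

def deviation_from_ideal(a, b, c, ideal_deg=180.0):
    """Absolute difference between the measured joint angle and the ideal."""
    return abs(ideal_deg - joint_angle(a, b, c))

# Hypothetical hip, knee and ankle keypoints (arbitrary units)
# for a leg that looks straight to the naked eye.
hip, knee, ankle = (0.0, 0.0), (0.0, 1.0), (0.05, 2.0)

TOLERANCE_DEG = 1.0  # assumed threshold, not taken from any scoring code
dev = deviation_from_ideal(hip, knee, ankle)
print(f"deviation: {dev:.2f} degrees, flagged: {dev > TOLERANCE_DEG}")
```

In this sketch the ankle is offset by only 5% of the lower-leg length, yet the deviation already exceeds the assumed tolerance, which is the kind of imperfection a human judge would be unlikely to see.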

While AI is often presented as objective, new biases can emerge through the design and implementation of these systems. For instance, an algorithm trained mainly on male performances or dominant styles may unintentionally penalise certain body types.

In addition, AI struggles to account for artistic expression and emotions – elements considered central in sports such as gymnastics and figure skating. Finally, while AI promises greater consistency, maintaining it requires ongoing human oversight to adapt rules and systems as disciplines evolve.

Action sports follow a different logic

Our research shows that these concerns are even more pronounced in action sports such as snowboarding and freestyle skiing. Many of these disciplines were added to the Olympic programme to modernise the Games and attract a younger audience. Yet researchers warn that Olympic inclusion can accelerate commercialisation and standardisation, at the expense of creativity and the identity of these sports.

A defining moment dates back to 2006, when US snowboarder Lindsey Jacobellis, leading the snowboard cross final, performed an acrobatic move – grabbing her board mid-air during a jump – and fell. The gesture, celebrated within her sport’s culture, cost her the Olympic gold medal. The episode illustrates the tension between the expressive ethos of action sports and institutionalised evaluation.

AI judging trials at the X Games

AI-assisted judging adds new layers to this tension. Earlier research on halfpipe snowboarding had already shown how judging criteria can subtly reshape performance styles over time. Unlike other judged sports, action sports place particular value on style, flow, and risk-taking – elements that are especially difficult to formalise algorithmically.

Yet AI was already tested at the 2025 X Games, notably during the snowboard SuperPipe competitions – a larger version of the halfpipe, with higher walls that enable bigger and more technical jumps. Video cameras tracked each athlete’s movements, while AI analysed the footage to generate an independent performance score. This system was tested alongside human judging, with judges continuing to award official results and medals. The trial therefore did not affect official outcomes, and no public comparison has been released regarding how closely AI scores aligned with those of human judges.

Nonetheless, reactions were sharply divided: some welcomed greater consistency and transparency, while others warned that AI systems would not know what to do when an athlete introduces a new trick – something often highly valued by human judges and the crowd.

Beyond judging: training, performance and the fan experience

The influence of AI extends far beyond judging itself. In training, motion tracking and performance analytics increasingly shape technique development and injury prevention, influencing how athletes prepare for competition. At the same time, AI is transforming the fan experience through enhanced replays, biomechanical overlays, and real-time explanations of performances. These tools promise greater transparency, but they also frame how performances are understood – adding more “storytelling” around what can be measured, visualised, and compared.

At what cost?

The Olympic AI Agenda’s ambition is to make sport fairer, more transparent, and more engaging. Yet as AI becomes integrated into judging, training, and the fan experience, it also plays a quiet but powerful role in defining what counts as excellence. If elite judges are gradually replaced or sidelined, the effects could cascade downward – reshaping how lower-tier judges are trained, how athletes develop, and how sports evolve over time. The challenge facing Olympic sports is therefore not only technological; it is institutional and cultural: how can we prevent AI from hollowing out the values that give each sport its meaning?

Willem Standaert, Associate Professor, Université de Liège

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Sunday, 1 February 2026

World’s Most Northern Electric Ferry Now Sailing in Frigid -13°F Temps (-25°C)

Wednesday, 28 January 2026

India’s life sciences leaders scaling AI, digital transformation: Report

Friday, 23 January 2026

Centre sanctions 24 chip design projects in big push to India's semiconductor industry

Tuesday, 6 January 2026

Hyundai Motor chief vows AI-driven growth, faster decision-making

Sunday, 21 December 2025

India emerges as world’s 3rd most competitive AI power

Monday, 8 December 2025

Tongue-Zapping Device Does More in 6 Months Than 4 Years of Normal Stroke Rehabilitation

Friday, 14 November 2025

Women at forefront of technology, leading with vision: Industry leaders

Monday, 10 November 2025

Driverless Electric Bus Eases Driver Shortages and Congestion In Madrid During Maiden Service

Friday, 17 October 2025

Telco transformation and the AI efficiency imperative

Wednesday, 8 October 2025

How safe is your face? The pros and cons of having facial recognition everywhere

Joanne Orlando, Western Sydney University

Walk into a shop, board a plane, log into your bank, or scroll through your social media feed, and chances are you might be asked to scan your face. Facial recognition and other kinds of face-based biometric technology are becoming an increasingly common form of identification.

The technology is promoted as quick, convenient and secure – but at the same time it has raised alarm over privacy violations. For instance, major retailers such as Kmart have been found to have broken the law by using the technology without customer consent.

So are we seeing a dangerous technological overreach or the future of security? And what does it mean for families, especially when even children are expected to prove their identity with nothing more than their face?

The two sides of facial recognition

Facial recognition tech is marketed as the height of seamless convenience.

Nowhere is this clearer than in the travel industry, where airlines such as Qantas tout facial recognition as the key to a smoother journey. Forget fumbling for passports and boarding passes – just scan your face and you’re away.

In contrast, when big retailers such as Kmart and Bunnings were found to be scanning customers’ faces without permission, regulators stepped in and the backlash was swift. Here, the same technology is not seen as a convenience but as a serious breach of trust.

Things get even murkier when it comes to children. Due to new government legislation, social media platforms may well introduce face-based age verification technology, framing it as a way to keep kids safe online.

At the same time, schools are trialling facial recognition for everything from classroom entry to paying in the cafeteria.

Yet concerns about data misuse remain. In one incident, Microsoft was accused of mishandling children’s biometric data.

For children, facial recognition is quietly becoming the default, despite very real risks.

A face is forever

Facial recognition technology works by mapping someone’s unique features and comparing them against a database of stored faces. Unlike passive CCTV cameras, it doesn’t just record, it actively identifies and categorises people.
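As a rough sketch of that matching step, assume each face has already been reduced to a short list of numbers (an "embedding") by some upstream model. Identification then comes down to finding the most similar stored vector and accepting it only above a threshold. The vectors, names and the 0.9 threshold below are invented for illustration and do not describe any particular product.

```python
import math

def cosine_similarity(u, v):
    """Similarity of two equal-length vectors, from -1 (opposite) to 1 (same direction)."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v)

def identify(probe, database, threshold=0.9):
    """Return (name, score) for the best match, or (None, score) if below threshold."""
    best_name, best_score = None, -1.0
    for name, stored in database.items():
        score = cosine_similarity(probe, stored)
        if score > best_score:
            best_name, best_score = name, score
    if best_score >= threshold:
        return best_name, best_score
    return None, best_score

# Hypothetical three-number embeddings; real systems use far longer vectors.
database = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.1, 0.8, 0.5],
}

match, score = identify([0.88, 0.12, 0.31], database)
print(match, round(score, 3))
```

The threshold is the design choice that matters most here: lowering it lets more genuine matches through but also increases the chance of identifying the wrong person.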

This may feel similar to earlier identity technologies. Think of the check-in QR code systems that quickly sprang up at shops, cafes and airports during the COVID pandemic.

Facial recognition may be on a similar path of rapid adoption. However, there is a crucial difference: where a QR code can be removed or an account deleted, your face cannot.

Why these developments matter

Permanence is a big issue for facial recognition. Once your – or your child’s – facial scan is stored, it can stay in a database forever.

If the database is hacked, that identity is compromised. In a world where banks and tech platforms may increasingly rely on facial recognition for access, the stakes are very high.

What’s more, the technology is not foolproof. Mis-identifying people is a real problem.

Age-estimating systems are also often inaccurate. One 17-year-old might easily be classified as a child, while another passes as an adult. This may restrict their access to information or place them in the wrong digital space.

A lifetime of consequences

These risks aren’t just hypothetical. They already affect lives. Imagine being wrongly placed on a watchlist because of a facial recognition error, leading to delays and interrogations every time you travel.

Or consider how stolen facial data could be used for identity theft, with perpetrators gaining access to accounts and services.

In the future, your face could even influence insurance or loan approvals, with algorithms drawing conclusions about your health or reliability based on a photo or video.

Facial recognition does have some clear benefits, such as helping law enforcement identify suspects quickly in crowded spaces and providing convenient access to secure areas.

But for children, the risks of misuse and error stretch across a lifetime.

So, good or bad?

As it stands, facial recognition would seem to carry more risks than rewards. In a world rife with scams and hacks, we can replace a stolen passport or driver’s licence, but we can’t change our face.

The question we need to answer is where we draw the line between reckless implementation and mandatory use. Are we prepared to accept the consequences of the rapid adoption of this technology?

Security and convenience are important, but they are not the only values at stake. Until robust, enforceable rules around safety, privacy and fairness are firmly established, we should proceed with caution.

So next time you’re asked to scan your face, don’t just accept it blindly. Ask: why is this necessary? And do the benefits truly outweigh the risks – for me, and for everyone else involved?

Joanne Orlando, Researcher, Digital Wellbeing, Western Sydney University

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Monday, 22 September 2025

IIVR helps grow multiple vegetables in one plant

Monday, 15 September 2025

Semiconductor product design leadership forum to boost innovation launched in India

New Delhi, (IANS): In a bid to position India as a global leader in chip design, intellectual property (IP) creation and high-value innovation, a Semiconductor Product Design Leadership Forum was launched here on Monday.

Friday, 12 September 2025

TinyML: The Small Technology Tackling the Biggest Climate Challenge

Thursday, 14 August 2025

Global 5G Modem Market is projected to grow at a CAGR of 12.45% from 2024 to 2032, reaching a value of USD 5.6 billion by the end of the forecast period

Thursday, 7 August 2025

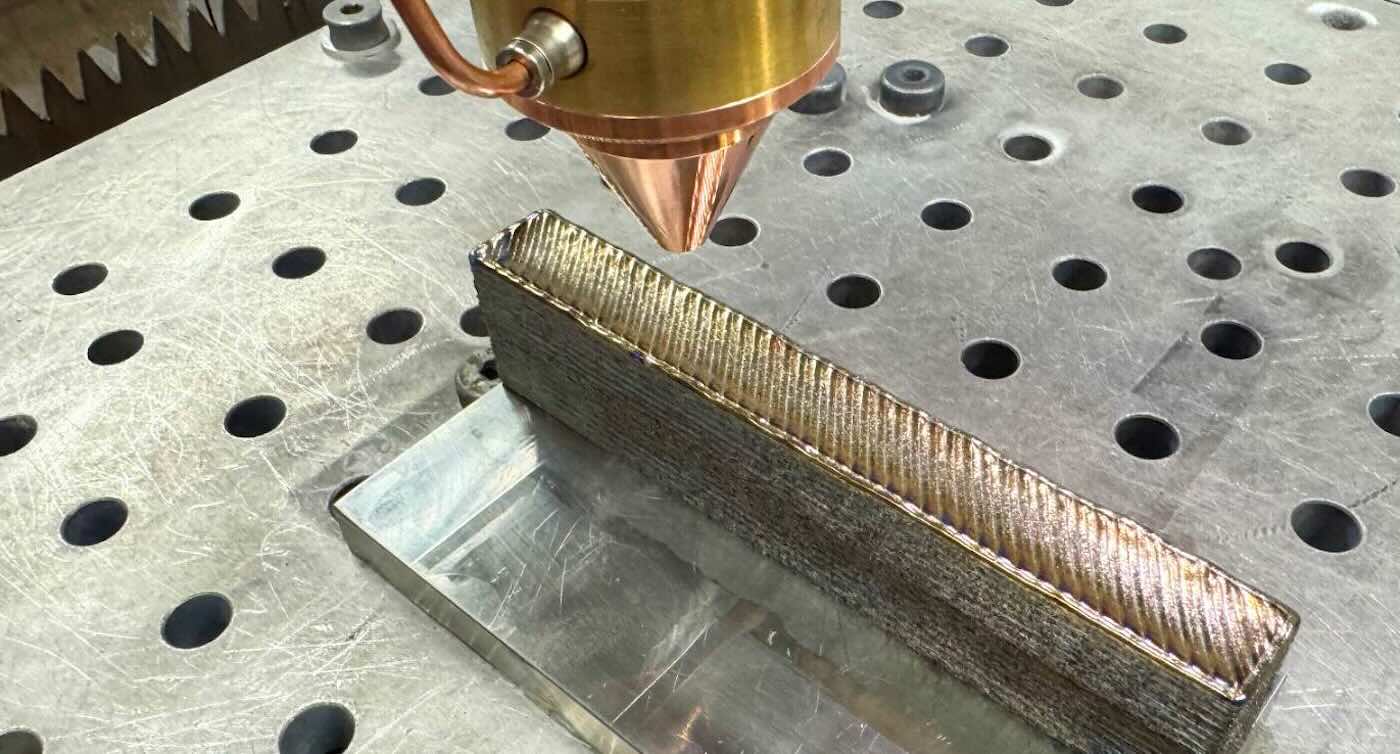

New 3D-Printed Titanium Alloy is Stronger Than the Standard – Yet 30% Cheaper

Ryan Brooke inspects a sample of the new titanium – Photo by Michael Quin (RMIT University)

Tuesday, 5 August 2025

India to host AI impact summit 2026, leading global dialogue on democratising AI

New Delhi, (IANS): India is set to host the AI Impact Summit in February 2026, reinforcing its commitment to democratising Artificial Intelligence (AI) for the public good, the Parliament was informed on Wednesday.

Thursday, 31 July 2025

5G Advanced powers world’s largest fleet of driverless coal mining trucks in China